Diffusing Diffusion

bringing AI art to a computer near you

Recent advances in artificial intelligence allow us (humans) to turn text into images, including some very impressive artwork. Perhaps you've dabbled with DALL-E 2, Midjourney, or DreamStudio; if so, I suspect you've pondered how to stretch those precious credits. Well, it's surprisingly easy to set up on your home computer, and may run faster than you think. Enter: Easy Diffusion, the easiest way to install and use Stable Diffusion on your computer.

Stable Diffusion, perhaps the most versatile model for text-to-image generation, with its myriad open-source tooling, was released to the public on August 22nd, 2022, under the CreativeML Open RAIL-M license -- I am not a lawyer, but this license gives us individuals broad freedoms; just be careful with your creations.

On Windows, simply download & run the installer. Once complete, a browser tab should open to http://localhost:9000/ -- the default port. There are several options to play with, but the defaults should get you pretty far -- the rest of my discussion leaves all options alone.

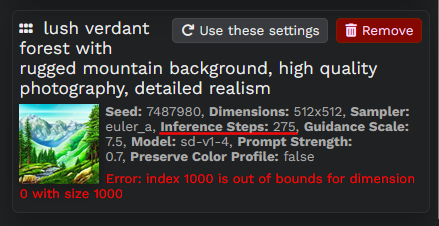

For context, my laptop has an NVIDIA GTX 1050 Ti with 4 GB of VRAM. In under a minute, this light gaming GPU (from 2016) can generate a 512x512 pixel image using the (default) sd-v1-4 model and 25 inference steps. With a little more time, it can handle 250 inference steps -- at 275, it hits an index out of bounds exception.

Text to image

Image generation occurs in steps, with the AI "thinking" as it iteratively generates the next variant. The "inference steps" option controls how many occur before displaying a result. This process is succinctly explained by Stablecog:

Stable Diffusion starts with an image that consists of random noise. Then it continuously denoises this image over and over again to steer it to the direction of your prompt. Inference steps controls how many steps will be taken during this process. The higher the value, the more steps that are taken to produce the image (also more time).

To show how the number of steps influences a result, let's try an example. Feel free to play along -- the same parameters should (🤞) produce the same result.

Prompt -> lush verdant forest with rugged mountain background, high quality photography, detailed realism

Seed -> 2107891525

Inference Steps -> +25 per iteration
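If it helps to see the run plan written out, here is a minimal sketch that builds one settings object per image, raising the step count by 25 each time. The variable names and the 250-step ceiling are assumptions for illustration (250 is simply the highest count mentioned earlier as working on a 4 GB card), and nothing here talks to Easy Diffusion itself; the values still need to be entered in the UI.

```powershell
# Hypothetical run plan: same prompt and seed, inference steps rising by 25.
$prompt = 'lush verdant forest with rugged mountain background, high quality photography, detailed realism'
$seed = 2107891525
$stepIncrement = 25
$maxSteps = 250   # assumption: stop at the highest step count reported to work

# Collect one settings object per iteration
$runs = for ($steps = $stepIncrement; $steps -le $maxSteps; $steps += $stepIncrement) {
    [PSCustomObject]@{
        Prompt         = $prompt
        Seed           = $seed
        InferenceSteps = $steps
    }
}

# List the planned iterations
$runs | Format-Table Seed, InferenceSteps
```

Keeping the prompt and seed fixed means the step count is the only thing that changes between images.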

See Writing Prompts in the stable-diffusion-ui documentation for advanced tips.

Image to image

Have you ever envisioned something grand, knowing you lacked the artistic ability to make that image a reality? With Stable Diffusion's img2img functionality, you might just "draw" knights from your very own stick figures. The process requires a fair bit of nudging, but the AI will add incredible detail -- Easy/Stable Diffusion also support an array of advanced features to adjust & iterate to your heart's content (see: /r/StableDiffusion's Dreamer's guide).

As an example, I drew a beautiful river winding through a mountainous valley...

And let the AI do its thing...

After some work I gave up at...

The above work was all done with v1.4 of the Stable Diffusion model. I only realized this when I stumbled upon v2.1 on Hugging Face -- go set up an account & snag an API key. So here's the same initial "drawing" using the same seed & 25 inference steps, but using the sd-v2-1 model -- and running a UI on Azure (see below).

Giffing it

The above gifs were generated with PowerShell and ImageMagick -- similar to my U.S. Senate Relative Political Positions (1789-2021) post.

I first gathered each set of images in its own directory, with file names prefixed by order (e.g. 1-image.jpeg). Then comes the scripting...

```powershell
# Requires PowerShell 7+ (ternary operator) and ImageMagick (magick.exe) on the PATH.

# Get images in the correct order, sorted numerically by their file-name prefix
$imagesInOrder = @(
    Get-ChildItem . -File |
        Select-Object -ExpandProperty Name |
        ForEach-Object {
            # Skip anything that does not start with a number
            if ($_ -match '^(\d+)') {
                $number = [int]::Parse($Matches[1])
                $_ | Add-Member -MemberType NoteProperty -Name Number -Value $number -PassThru
            }
        } |
        Sort-Object -Property Number
)

# Add additional information to each image
$author = "Chris Parker`n(6/5/2023)"
$brand = "Stable Diffusion"
$title = "lush verdant forest`nwith rugged mountain background,`nhigh quality photography, detailed realism"
$citation = "seed: 2107891525"

$count = $imagesInOrder.Count
$labeledImages = New-Object System.Collections.Generic.List[string]

for ($i = 0; $i -lt $count; $i++) {
    $image = $imagesInOrder[$i]
    $imageFileName = [System.IO.Path]::GetFileNameWithoutExtension($image)
    $newFileName = "$($imageFileName)_labeled.jpeg"

    # Pad single-digit iteration numbers so the label keeps a constant width
    $bufferedNumber = ($count -gt 9 -and $i -lt 9) ? " $($i + 1)" : "$($i + 1)"
    $fullBrand = "$brand (iteration $bufferedNumber/$count)"

    # Extend the canvas to 512x652 with a white banner on top, then draw each label
    & magick.exe convert $image -resize 512x652 -gravity south -background white -extent 512x652 $newFileName
    & magick.exe convert $newFileName -fill black -pointsize 24 -gravity North -draw "text 0,20 '$title'" $newFileName
    & magick.exe convert $newFileName -fill "#555555" -pointsize 16 -gravity North -draw "text 0,110 '$citation'" $newFileName
    & magick.exe convert $newFileName -fill "#555555" -pointsize 12 -gravity NorthWest -draw "text 10,10 '$author'" $newFileName
    & magick.exe convert $newFileName -fill "#555555" -pointsize 10 -gravity NorthWest -draw "text 10,115 '$fullBrand'" $newFileName

    $labeledImages.Add($newFileName)
}

# Generate gif (-delay is measured in hundredths of a second)
$secondsPerFrame = 1
$delayValue = 100 * $secondsPerFrame
& magick.exe convert -delay $delayValue -loop 0 $labeledImages text-to-image_verdant-forest-mountains.gif

# Cleanup intermediate files
Get-ChildItem . -Filter *_labeled.jpeg | Remove-Item
```
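The script above expects each file name to start with its order number. If the images come out of the UI without one, something like the following sketch could add the prefixes. `Add-OrderPrefix` is a hypothetical helper, not part of any tool mentioned here, and it assumes the oldest file (by last write time) is the first iteration; check that this holds for your files before renaming anything.

```powershell
# Hypothetical helper: prefix each file with its 1-based position so the
# names sort in generation order (e.g. 1-image.jpeg, 2-image.jpeg, ...).
function Add-OrderPrefix {
    param(
        [Parameter(Mandatory)]
        [System.IO.FileInfo[]] $Files
    )

    $position = 0
    # Assumption: the oldest file is the first iteration
    foreach ($file in ($Files | Sort-Object -Property LastWriteTime)) {
        $position++
        $newName = '{0}-{1}' -f $position, $file.Name
        Rename-Item -LiteralPath $file.FullName -NewName $newName
    }
}

# Example call (renames files in the current directory, so try it on a copy first):
# Add-OrderPrefix -Files (Get-ChildItem . -File -Filter *.jpeg)
```

Zero-padding the prefix isn't needed, since the gif script sorts on the parsed number rather than on the file name.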

To the Cloud ☁

For those without a compatible GPU and/or looking to generate faster, it's possible to run with Microsoft Azure ML Compute -- I have not confirmed all costs associated with doing so (see: Azure pricing).

...but I gave it a shot anyway... because I'm fortunate to have a Visual Studio License that affords me a generous Azure allowance -- thank you Microsoft ✌.

While I intend to play with more powerful cards, I started with the modest Standard_NC6, which should run <$2 per hour. Given it's a "dedicated" instance, I intend to max it out within that hour.

As for the perks... with only two more cores than my personal laptop (albeit a lot more RAM), it "appears" to generate a single image about half as fast. I suspect batch processing is where the benefits will really shine -- that, and not wearing down my personal laptop... 😉

The UI isn't quite as friendly (or powerful) as Easy Diffusion's, but perhaps they can be swapped...?